The Real Problem: Observability Without Operational Intelligence

Your monitoring stack produces telemetry. Logs stream at 400 GB per day. APM tools track latency across 18 microservices. Infrastructure metrics flood dashboards every 15 seconds. You have observability: comprehensive visibility into system state.

What you do not have is operational intelligence: the ability to determine what matters right now, why it is happening, and what prevents it from recurring.

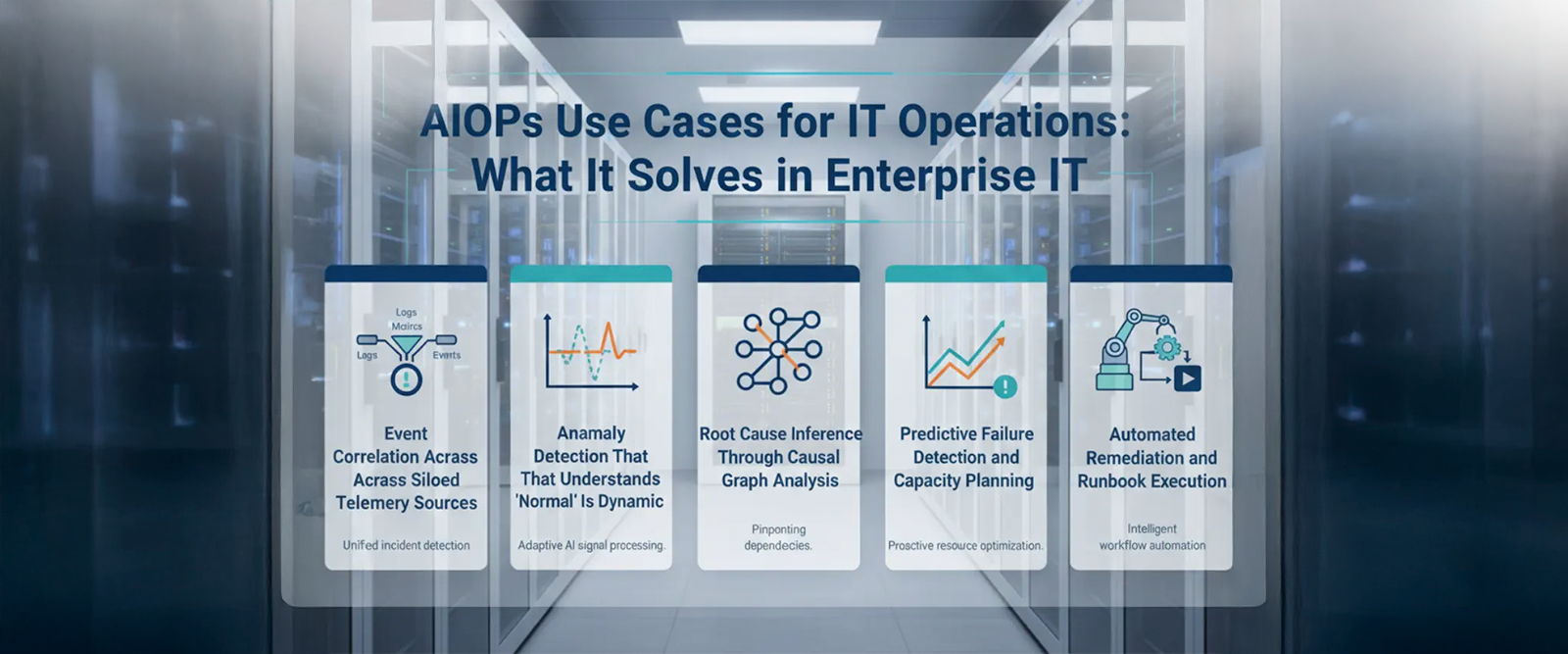

The delta between these two states is where Indian enterprises lose hours during incidents and why the same database connection pool exhaustion happens quarterly. Monitoring tells you a threshold broke. AIOps tells you the payment gateway is down because the authentication service scaled unevenly after the 2 AM deployment, and this is the third time this specific dependency chain has caused customer impact. That distinction, between alert volume and decision-grade intelligence, is what AIOps platforms are built to close. Here are five proven use cases, delivered through iStreet Network’s Resilient Operations solutions powered by HEAL Software, that demonstrate how.

Use Case 1: Event Correlation Across Siloed Telemetry Sources

The operational gap: A checkout failure in production triggers 47 alerts across your monitoring stack and ITSM tool within 90 seconds. Your on-call engineer receives notifications from three channels simultaneously. Which alert represents the root cause? Which seventeen are symptoms? The engineer spends 12 minutes correlating timestamps, tracing dependencies manually, and ruling out false positives before even starting remediation.

What AIOps solves: The correlation engine ingests events from heterogeneous sources, normalises timestamps and metadata, then applies temporal and topological analysis to correlate related alerts into a single incident with ranked probable causes. The same 47 alerts become one incident tagged with ‘API gateway timeout’ as primary signal and ‘upstream database saturation’ as contributing factor.

Topology awareness is critical here. The AIOps platform maintains a service dependency graph, often learned from traffic patterns rather than manually configured, so when an alert fires in the authentication layer, the correlation engine already knows which downstream services are likely to fail in turn. Alerts are not just grouped by time proximity; they are weighted by dependency relationships and historical co-occurrence patterns.
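To make the idea concrete, here is a minimal Python sketch of temporal plus topological grouping. It is an illustration under stated assumptions, not how HEAL Software or any specific platform implements correlation: the `DEPENDS_ON` graph, the service names, the 90-second window, and the "count of alerting dependents" ranking are all hypothetical, and historical co-occurrence weighting is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    service: str
    timestamp: float  # seconds since the start of the observation period
    message: str


# Hypothetical dependency graph: each service maps to the services it calls.
DEPENDS_ON = {
    "checkout": ["api-gateway"],
    "api-gateway": ["auth", "orders-db"],
    "auth": [],
    "orders-db": [],
}


def upstream_of(service, graph):
    """Return every service that `service` depends on, directly or transitively."""
    seen, stack = set(), list(graph.get(service, []))
    while stack:
        node = stack.pop()
        if node not in seen:
            seen.add(node)
            stack.extend(graph.get(node, []))
    return seen


def rank_causes(incident, graph):
    """Order alerting services so the one with most alerting dependents is first."""
    services = {a.service for a in incident}
    score = {
        s: sum(1 for t in services if s in upstream_of(t, graph)) for s in services
    }
    ranked = sorted(services, key=lambda s: (-score[s], s))
    return {"alerts": incident, "probable_causes": ranked}


def correlate(alerts, graph, window_seconds=90.0):
    """Group alerts that are close in time AND connected in the dependency graph."""
    incidents = []
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        for incident in incidents:
            # The window is measured from the first alert of the incident.
            in_window = alert.timestamp - incident[0].timestamp <= window_seconds
            related = any(
                alert.service == other.service
                or alert.service in upstream_of(other.service, graph)
                or other.service in upstream_of(alert.service, graph)
                for other in incident
            )
            if in_window and related:
                incident.append(alert)
                break
        else:
            # No existing incident matched, so this alert opens a new one.
            incidents.append([alert])
    return [rank_causes(incident, graph) for incident in incidents]
```

Calling `correlate(alerts, DEPENDS_ON)` on alerts from `checkout`, `api-gateway`, and `orders-db` fired within the window yields one incident whose `probable_causes` list starts with the deepest shared dependency, while an alert from an unrelated service or one outside the window opens a separate incident.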

Measurable outcome: Enterprises typically see alert noise reduction between 60 and 85% after correlation tuning. More importantly, MTTR drops by 30 to 50% because engineers start diagnosis with a ranked hypothesis rather than raw alert lists.

Why it matters to your IT team: You already paid for observability tools. AIOps makes that investment operationally useful by turning signals into decisions. Every incident that resolves 20 minutes faster is customer impact you avoided and engineering capacity you reclaimed.

Use Case 2: Anomaly Detection That Understands Normal Is Dynamic

The operational gap: Static thresholds fail in elastic infrastructure. CPU at 75% is normal during batch processing windows but critical during checkout traffic. A human-tuned alert either fires constantly, creating alert fatigue, or misses genuine degradation and lets SLA breaches go undetected. Your team adjusts thresholds quarterly, but seasonal traffic patterns and feature releases keep invalidating those baselines.

What AIOps solves: Behavioural anomaly detection builds dynamic baselines per metric, per service, per time window. Machine learning models learn that Friday evening API latency sits at p95 of 240ms while Tuesday morning runs at p95 of 95ms, and both are normal. When Friday evening latency hits 320ms, the model flags deviation from expected behaviour — not deviation from a static number.
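A minimal Python sketch of a per-time-window baseline follows. It assumes a simple z-score over samples bucketed by weekday and hour, which stands in for whatever models a real AIOps platform uses; the class name `SeasonalBaseline`, the thresholds, and the sample values are hypothetical.

```python
from collections import defaultdict
from statistics import mean, stdev


class SeasonalBaseline:
    """Keeps a separate baseline per (weekday, hour) bucket for one metric."""

    def __init__(self, z_threshold=3.0, min_samples=5):
        self.z_threshold = z_threshold  # assumed cut-off, tune per metric
        self.min_samples = min_samples
        self._history = defaultdict(list)

    def observe(self, weekday, hour, value):
        """Record one historical sample, e.g. the p95 latency for that hour."""
        self._history[(weekday, hour)].append(value)

    def is_anomalous(self, weekday, hour, value):
        """Compare a new value with the baseline for the same time window."""
        samples = self._history.get((weekday, hour), [])
        if len(samples) < self.min_samples:
            return False  # not enough history to judge this window yet
        mu, sigma = mean(samples), stdev(samples)
        if sigma == 0:
            return value != mu
        return abs(value - mu) / sigma > self.z_threshold


baseline = SeasonalBaseline()
for p95_ms in [235, 240, 245, 238, 242, 240]:
    baseline.observe("fri", 19, p95_ms)  # Friday evening history
for p95_ms in [92, 95, 98, 94, 96, 95]:
    baseline.observe("tue", 9, p95_ms)   # Tuesday morning history
```

With this history, 320ms on Friday evening is flagged while 243ms is not, and 240ms on Tuesday morning is flagged even though the same value is normal for Friday. The same number can be healthy or anomalous depending on the window it lands in.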

Context matters profoundly. The AIOps platform correlates anomalies with deployment events, infrastructure changes, and external dependencies. If cloud storage latency spikes and your upload service simultaneously shows increased error rates, the anomaly detector surfaces both signals together with the probable causal relationship — not as two separate, unconnected incidents.

Advanced implementations use unsupervised learning to detect unknown failure modes: patterns that do not match any historical incident but deviate from learned normal behaviour. This catches novel degradation — the kind your runbooks have not documented yet — giving your team early warning for genuinely new types of failures.

Measurable outcome: False positive alerts fall by 40 to 70% as dynamic baselines replace static thresholds. Capacity exhaustion and performance degradation are detected earlier, typically minutes to hours before threshold-based alerts would fire and, critically, before customer-facing impact occurs.

Why it matters to your IT team: False positives erode on-call engineer trust and response speed. When 70% of pages are noise, your team stops treating alerts as urgent: the ‘boy who cried wolf’ effect. Dynamic anomaly detection restores signal quality, which directly improves incident response discipline and on-call team morale.

Use Case 5: Automated Remediation and Self-Healing

The operational gap: Your team documents remediation procedures for known failure modes. Runbooks exist for database failover, cache clearing, pod restarts, and traffic rerouting. But incident response still requires an engineer to diagnose the problem, locate the correct runbook, execute steps manually, and verify resolution. For a known issue with a documented fix, you are still paying 15 to 20 minutes of human latency plus on-call interruption cost.

What AIOps solves: Closed-loop automation connects incident detection to remediation execution. When the platform identifies a known failure pattern (for example, database connection saturation matching a historical signature), it triggers predefined remediation workflows. The system executes the runbook: it scales connection pool capacity, terminates long-running queries, and alerts the secondary on-call if remediation fails.

The critical distinction from simple automation is conditional execution based on diagnostics. The AIOps platform does not just trigger scripts on alerts; it assesses incident classification certainty, verifies remediation prerequisites, and halts if conditions do not match expected patterns. This prevents automation from amplifying novel failures, a critical safety requirement in regulated environments.
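The gating logic described above can be sketched in a few lines of Python. This is an illustrative outline under assumptions, not a vendor implementation: the `Runbook` structure, the `remediate` function, the 0.9 confidence floor, and the choice to page a human whenever automation halts are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Runbook:
    name: str
    prerequisites: list  # zero-argument callables returning True when safe
    steps: list          # zero-argument callables that perform the fix
    verify: Callable[[], bool]  # returns True once the service has recovered


@dataclass
class RemediationResult:
    status: str  # "resolved", "halted", or "escalated"
    log: list = field(default_factory=list)


def remediate(confidence, runbook, escalate, min_confidence=0.9):
    """Run a runbook only if classification and prerequisites both check out."""
    result = RemediationResult(status="halted")

    # Gate 1: do not act on a weak match to a known failure signature.
    if confidence < min_confidence:
        result.log.append(f"halted: confidence {confidence:.2f} below threshold")
        escalate(result.log[-1])
        return result

    # Gate 2: every prerequisite must hold before anything is changed.
    for check in runbook.prerequisites:
        if not check():
            result.log.append(f"halted: prerequisite {check.__name__} failed")
            escalate(result.log[-1])
            return result

    for step in runbook.steps:
        step()
        result.log.append(f"executed: {step.__name__}")

    # Verification decides between closing the loop and paging a human.
    if runbook.verify():
        result.status = "resolved"
        result.log.append("verified: service recovered")
    else:
        result.status = "escalated"
        result.log.append("verification failed")
        escalate(result.log[-1])
    return result
```

Here `escalate` could be any callable that pages the secondary on-call, and `result.log` is the record that would be posted to the incident channel. Steps run only after both gates pass, so a low-confidence or unexpected incident leaves the system untouched.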

Integration with collaboration platforms closes the loop. Automated remediation posts actions taken, metrics affected, and verification results directly into incident channels, so on-call engineers maintain visibility even when automation handles resolution. The audit trail is complete and compliance-ready.

Measurable outcome: MTTR improvement of 40 to 60% for known, automatable incident classes. On-call interruptions fall by 30 to 45% as low-severity, repetitive incidents resolve automatically. Most critically, remediation quality becomes consistent: automation does not skip steps or misapply fixes under pressure.

Why it matters to your IT team: Engineer time is your scarcest operational resource. Automated remediation reallocates that capacity from repetitive incident response to preventive reliability work. On-call quality improves (fewer pages for issues that should resolve automatically), which directly impacts retention of senior operational talent, a critical concern in India’s competitive technology talent market.

The Implementation Reality: Not Plug-and-Play

The AIOps platform requires operational investment before delivering operational intelligence. The technology is not turnkey; it is a capability layer that demands tuning, training data, and integration work.

Topology discovery takes time. Accurate service dependency mapping requires weeks of traffic analysis or manual configuration. Without correct topology, correlation produces groupings that do not reflect actual causal relationships. Model training needs historical data — anomaly detection requires 30 to 90 days of baseline data per metric to learn normal behaviour patterns. Seasonal businesses need full-year data sets to capture cyclical patterns. Initial deployment accuracy is lower until models stabilise.

Integration complexity scales with tool sprawl. If you are ingesting from twelve different monitoring systems, each integration requires API mapping, authentication configuration, and data normalisation. False positive tuning is iterative — initial deployments often over-alert or misclassify incidents, requiring operational feedback loops over multiple incident cycles.

The enterprises that succeed treat AIOps deployment as a 6 to 12 month operational maturity programme, not a software installation. Start with a single high-noise domain — your Kubernetes infrastructure, your API gateway layer, or your core banking platform — tune correlation and anomaly detection there, prove value, then expand coverage.

When AIOps Solves Actual Problems

AIOps makes sense when you have specific operational pain that observability alone cannot fix. You are drowning in alerts: correlation delivers immediate value. Repeat incidents consume disproportionate time: causal inference addresses that gap. Your infrastructure outgrew static monitoring: dynamic anomaly detection restores signal quality. You have documented runbooks that are not executed consistently: automation standardises execution.

AIOps does not solve observability gaps; it solves operational intelligence gaps. If you cannot answer ‘what is happening right now’ because you lack telemetry coverage, buy better monitoring first. If you can answer ‘what is happening’ but cannot answer ‘why it matters, what caused it, and how we stop it recurring’, that is where iStreet Network’s Resilient Operations solutions create transformative value.

Talk to our advisors to assess where AIOps delivers the highest impact in your environment.

Originally inspired by insights from HEAL Software, an iStreet Network AIOps product. Learn more at healsoftware.ai.